Published - Tue, 06 Dec 2022

A list of frequently asked software testing (and QTP) interview questions and answers is given below.

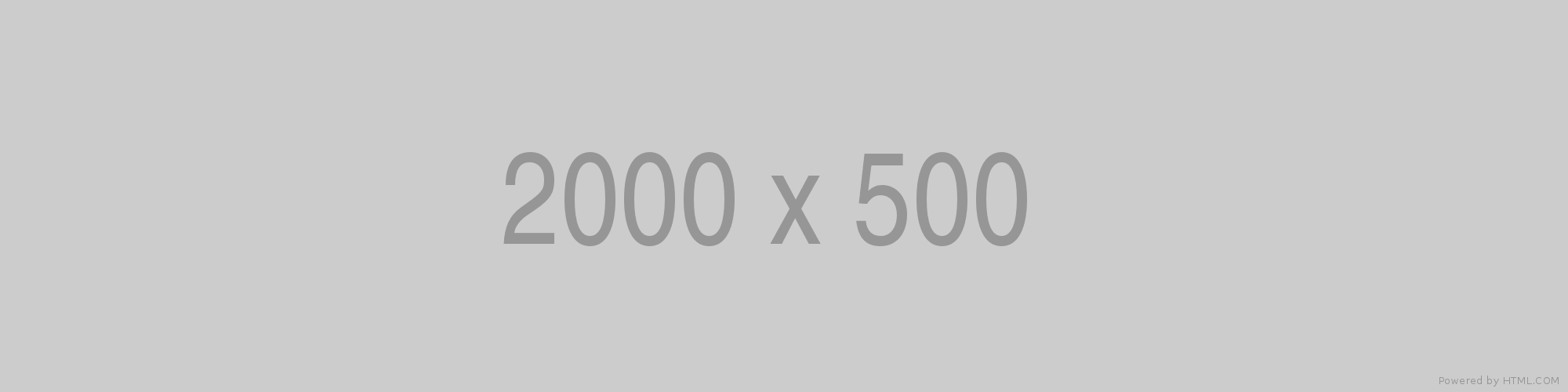

There are four steps in a normal software development process. In short, these steps are referred to as PDCA.

PDCA stands for Plan, Do, Check, Act.

The developers do the "planning and building" of the project while testers do the "check" part of the project.

Black box Testing: The strategy of black box testing is based on requirements and specifications. It requires no knowledge of the internal paths, structure, or implementation of the software being tested.

White box Testing: White box testing is based on the internal paths, code structure, and implementation of the software being tested. It requires full and detailed programming skills.

Gray box Testing: This is another type of testing in which we look into the box being tested, but only to understand how it has been implemented. After that, we close the box and use black box testing.

The differences among white box, black box, and gray box testing are:

| Black box testing | Gray box testing | White box testing |
|---|---|---|
| Black box testing does not need knowledge of a program's implementation. | Gray box testing needs limited knowledge of a program's internals. | White box testing requires full knowledge of a program's implementation details. |
| It has a low granularity. | It has a medium granularity. | It has a high granularity. |
| It is also known as opaque box testing, closed box testing, input-output testing, data-driven testing, behavioral testing, and functional testing. | It is also known as translucent testing. | It is also known as glass box testing or clear box testing. |
| It is a form of user acceptance testing, i.e., it is done by end users. | It is also a form of user acceptance testing. | It is mainly done by testers and programmers. |
| Test cases are derived from the functional specifications, as internal details are not known. | Test cases are derived from limited knowledge of a program's internal details. | Test cases are derived from the internal details of a program. |

Designing tests early in the life cycle prevents defects from reaching the main code.

There are three types of defects: Wrong, missing, and extra.

Wrong: These defects occur when requirements have been implemented incorrectly.

Missing: Something is absent, i.e., a specification was not implemented, or a customer requirement was not properly noted.

Extra: A facility was incorporated into the product that was not requested by the end customer. It is always a variance from the specification, but it may be an attribute that the customer desired. It is still considered a defect because of the variance from the user requirements.

Simultaneous test design and execution against an application is called exploratory testing. In this testing, the tester uses their domain knowledge and testing experience to predict where and under what conditions the system might behave unexpectedly.

Exploratory testing is performed as a final check before the software is released. It is a complementary activity to automated regression testing.

It helps you to prevent defects in the code.

Risk-based testing is a testing strategy that prioritizes tests by risk. It is based on a detailed risk analysis that categorizes the risks by their priority. The highest-priority risks are addressed first.
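
The prioritization described above can be sketched in a few lines. This is only an illustration: the `RiskItem` class, the 1 to 5 scales, and the "likelihood times impact" score are assumptions chosen for the example, not a scoring scheme given in this article.

```python
from dataclasses import dataclass


@dataclass
class RiskItem:
    """A test together with a hypothetical risk rating."""
    name: str
    likelihood: int  # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int      # assumed scale: 1 (minor) to 5 (critical)

    @property
    def risk(self) -> int:
        # One common convention: risk score = likelihood * impact.
        return self.likelihood * self.impact


def prioritize(items):
    """Return the items ordered from highest to lowest risk score."""
    return sorted(items, key=lambda item: item.risk, reverse=True)


items = [
    RiskItem("update profile picture", likelihood=2, impact=1),
    RiskItem("transfer funds", likelihood=3, impact=5),
    RiskItem("login", likelihood=4, impact=4),
]
ordered = [item.name for item in prioritize(items)]
print(ordered)  # ['login', 'transfer funds', 'update profile picture']
```

Tests for the items at the front of the list would be designed and executed first.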

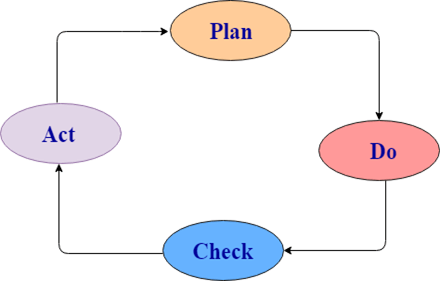

Acceptance testing is done to enable a user/customer to determine whether to accept a software product. It also validates whether the software meets a set of agreed acceptance criteria. At this level, the system is tested for user acceptability.

Types of acceptance testing are:

Accessibility testing is used to verify whether a software product is accessible to people with disabilities (for example, hearing, visual, or cognitive impairments).

Ad-hoc testing is a testing phase where the tester tries to 'break' the system by randomly trying the system's functionality.

Agile testing is a testing practice that follows agile methodologies, i.e., it follows a test-first design paradigm.

An Application Programming Interface (API) is a formalized set of software calls and routines that can be referenced by an application program to access supporting system or network services.

Testing with software tools that execute tests without manual intervention is known as automated testing. Automated testing can be used for GUI, performance, API testing, etc.

Bottom-up testing is an approach to integration testing in which the lowest-level components are tested first, followed by the higher-level components. The process is repeated until the top-level component has been tested.
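
One way to picture the bottom-up order is as a dependency graph that is walked from the leaves upward. The sketch below uses Python's standard `graphlib` module (Python 3.9+); the component names and the dependency graph are hypothetical.

```python
from graphlib import TopologicalSorter

# Hypothetical component graph: each key depends on the components in its set.
DEPENDS_ON = {
    "checkout_page": {"order_service"},
    "order_service": {"database", "payment_client"},
    "database": set(),
    "payment_client": set(),
}


def bottom_up_order(depends_on):
    """Return components so that every dependency comes before its dependents."""
    return list(TopologicalSorter(depends_on).static_order())


order = bottom_up_order(DEPENDS_ON)
print(order)  # lowest-level components first, top-level component last
```

Integration tests would then be run in this order, so a failure in a higher-level component is less likely to be caused by an untested lower-level one.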

In baseline testing, a set of tests is run to capture performance information. The information collected is used to make changes that improve the performance and capabilities of the application. Baseline testing compares the present performance of the application with its previous performance.
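
The comparison against a baseline can be as simple as a threshold check. The following is a minimal sketch; the function name, the millisecond figures, and the 10% tolerance are assumptions made for illustration.

```python
def has_regressed(baseline_ms: float, current_ms: float, tolerance: float = 0.10) -> bool:
    """Return True if the current timing is slower than the baseline by more than the tolerance."""
    return current_ms > baseline_ms * (1 + tolerance)


baseline_ms = 200.0  # hypothetical response time captured in the baseline run
print(has_regressed(baseline_ms, 210.0))  # False: within 10% of the baseline
print(has_regressed(baseline_ms, 250.0))  # True: more than 10% slower
```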

Benchmark testing is the process of comparing application performance against an industry standard set by some other organization.

It is a standard form of testing that shows where our application stands with respect to others.

There are two types of testing which are very important for web testing:

The difference between a web application and a desktop application is that a web application is open to the world, with potentially many users accessing it simultaneously at various times, so load testing and stress testing are important. Web applications are also prone to all forms of attacks, most commonly DDoS, so security testing is also very important for web applications.

Difference between verification and validation:

| Verification | Validation |
|---|---|
| Verification is static testing. | Validation is dynamic testing. |
| Verification occurs before validation. | Validation occurs after verification. |
| Verification evaluates plans, documents, requirements, and specifications. | Validation evaluates products. |
| In verification, the inputs are checklists, issue lists, walkthroughs, and inspections. | In validation testing, the actual product is tested. |
| The output of verification is a set of documents, plans, specifications, and requirement documents. | In validation, the actual product is the output. |

A list of differences between Retesting and Regression Testing:

| Regression | Retesting |
|---|---|
| Regression testing is a type of software testing that checks that a code change does not affect the current features and functions of an application. | Retesting is the process of re-executing the test cases that failed in the last execution. |
| The main purpose of regression testing is to ensure that changes made to the code do not affect existing functionality. | Retesting is applied to defect fixes. |
| Defect verification is not an element of regression testing. | Defect verification is an element of retesting. |
| Regression testing can be automated; performing it manually can be expensive and time-consuming. | Automation cannot be performed for retesting. |
| Regression testing is also known as generic testing. | Retesting is also known as planned testing. |
| Regression testing is concerned with executing test cases that passed in earlier builds. | Retesting is concerned with executing test cases that failed earlier. |
| Regression testing can be performed in parallel with retesting. | The priority of retesting is higher than that of regression testing. |
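
The split between the two suites can be illustrated by selecting test cases from the results of an earlier run. The test IDs and the results dictionary below are hypothetical.

```python
# Hypothetical results of the previous build's test run.
previous_run = {"TC-1": "pass", "TC-2": "fail", "TC-3": "pass", "TC-4": "fail"}


def retest_suite(results):
    """Test cases that failed earlier: re-run them to verify the defect fixes."""
    return sorted(tc for tc, status in results.items() if status == "fail")


def regression_suite(results):
    """Test cases that passed earlier: re-run them to check nothing else broke."""
    return sorted(tc for tc, status in results.items() if status == "pass")


print(retest_suite(previous_run))      # ['TC-2', 'TC-4']
print(regression_suite(previous_run))  # ['TC-1', 'TC-3']
```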

Preventative tests are designed earlier, and reactive tests are designed after the software has been produced.

The exit criteria are used to define the completion of the test level.

A decision table consists of inputs in a column with the outputs in the same column but below the inputs.

Decision table testing is used for testing systems for which the specification takes the form of rules or cause-effect combinations. The remainder of the table explores combinations of inputs to define the output produced.
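
A decision table translates naturally into data-driven tests: one rule per combination of inputs, each with its expected output. The sketch below assumes a hypothetical `login` function with two boolean conditions; the rules are invented for illustration.

```python
# Each rule maps a combination of input conditions to the expected output.
# Conditions: (valid_username, valid_password), a hypothetical login specification.
DECISION_TABLE = {
    (True, True): "grant access",
    (True, False): "show error",
    (False, True): "show error",
    (False, False): "show error",
}


def login(valid_username: bool, valid_password: bool) -> str:
    """Hypothetical system under test."""
    return "grant access" if valid_username and valid_password else "show error"


def run_decision_table():
    """Run one test per rule and return the input combinations that failed."""
    failures = []
    for inputs, expected in DECISION_TABLE.items():
        if login(*inputs) != expected:
            failures.append(inputs)
    return failures


print(run_decision_table())  # [] means every rule passed
```

With two boolean conditions there are four rules; each extra condition doubles the number of combinations, which is why decision tables are often reduced by merging rules that share an outcome.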

These are the key differences between alpha and beta testing:

| No. | Alpha Testing | Beta Testing |
|---|---|---|
| 1) | It is always done by developers at the software development site. | It is always performed by customers at their own site. |
| 2) | It is also performed by an independent testing team. | It is not performed by an independent testing team. |
| 3) | It is not open to the market and public. | It is open to the market and public. |
| 4) | It is always performed in a virtual environment. | It is always performed in a real-time environment. |
| 5) | It is used for software applications and projects. | It is used for software products. |
| 6) | It falls under both white box testing and black box testing. | It is only a kind of black box testing. |
| 7) | It is not known by any other name. | It is also known as field testing. |

Random testing is also known as monkey testing. In this testing, data is generated randomly, usually with a tool or some other automated mechanism.
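
A minimal sketch of random input generation is shown below. The function under test (`safe_divide`), the input range, and the number of runs are all hypothetical; the fixed seed is a design choice so that a failing run can be reproduced.

```python
import random


def safe_divide(a: int, b: int):
    """Hypothetical function under test: returns None instead of raising on b == 0."""
    if b == 0:
        return None
    return a / b


def random_test(runs: int = 1000, seed: int = 42) -> int:
    """Feed randomly generated inputs and count unexpected exceptions."""
    rng = random.Random(seed)  # fixed seed so a failing run can be reproduced
    crashes = 0
    for _ in range(runs):
        a = rng.randint(-100, 100)
        b = rng.randint(-100, 100)
        try:
            safe_divide(a, b)
        except Exception:
            crashes += 1
    return crashes


print(random_test())  # 0: no input caused an unexpected exception
```

Note that this sketch only detects crashes; it does not check that the returned values are correct.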

Random testing has some limitations:

Negative Testing: Providing invalid input and checking that the system responds with errors is known as negative testing.

Positive Testing: Providing valid input and checking that the expected actions are completed according to the specification is known as positive testing.

Test independence is very useful because it avoids author bias in defining effective tests.

In boundary value analysis/testing, we test at and around the exact boundaries rather than in the middle of the range. For example, consider a bank application where you can withdraw a maximum of 25000 and a minimum of 100. In boundary value testing, we test only values at and just beyond these limits, such as just below the minimum and just above the maximum, because defects tend to cluster at the boundaries.

The following figure shows the boundary value testing for the above-discussed bank application. TC1 and TC2 are sufficient to test all conditions for the bank. TC3 and TC4 are duplicate/redundant test cases that do not add any value to the testing. So by applying proper boundary value fundamentals, we can avoid such duplicate test cases.
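
For the bank application described above, the boundary values can be generated and checked as follows. This is a sketch under assumptions: `can_withdraw` is a hypothetical implementation of the withdrawal rule, and the six-value selection (one below, at, and one above each limit) is a common convention rather than the exact set of test cases in the figure.

```python
MIN_WITHDRAWAL = 100
MAX_WITHDRAWAL = 25000


def can_withdraw(amount: int) -> bool:
    """Hypothetical rule under test: the amount must lie within the limits."""
    return MIN_WITHDRAWAL <= amount <= MAX_WITHDRAWAL


def boundary_values(low: int, high: int):
    """Values just below, at, and just above each boundary."""
    return [low - 1, low, low + 1, high - 1, high, high + 1]


results = {
    amount: can_withdraw(amount)
    for amount in boundary_values(MIN_WITHDRAWAL, MAX_WITHDRAWAL)
}
print(results)
# {99: False, 100: True, 101: True, 24999: True, 25000: True, 25001: False}
```

A value such as 12000 from the middle of the range is deliberately left out, since it would exercise the same behavior as 101 or 24999.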

There are many ways to test the login feature of a web application:

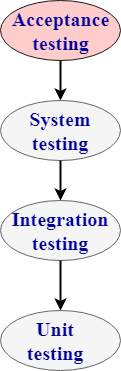

Performance testing: Performance testing is a testing technique that determines the performance of a system, such as its speed, scalability, and stability, under various load conditions. The product undergoes performance testing before it goes live in the market.

Types of performance testing are:

1. Load testing
2. Stress testing
3. Spike testing
4. Endurance testing
5. Volume testing
6. Scalability testing

| Basis of comparison | Functional testing | Non-functional testing |
|---|---|---|
| Description | Functional testing is a testing technique that checks that the functions of the application work according to the requirement specification. | Non-functional testing checks the non-functional aspects such as performance, usability, reliability, etc. |
| Execution | Functional testing is performed before non-functional testing. | Non-functional testing is performed after functional testing. |
| Focus area | It depends on the customer requirements. | It depends on the customer expectations. |
| Requirement | Functional requirements can be easily defined. | Non-functional requirements cannot be easily defined. |
| Manual testing | Functional testing can be performed manually. | Non-functional testing is hard to perform manually. |
| Testing types | Following are the types of functional testing: | Following are the types of non-functional testing: |

| Static testing | Dynamic testing |
|---|---|
| Static testing is a white box testing technique that is done at the initial stage of the software development lifecycle. | Dynamic testing is a testing process that is done at a later stage of the software development lifecycle. |
| Static testing is performed before code deployment. | Dynamic testing is performed after code deployment. |
| It is implemented at the verification stage. | It is implemented at the validation stage. |
| Code is not executed during this type of testing. | Code execution is necessary for dynamic testing. |
| In static testing, a checklist is made for the testing process. | In dynamic testing, test cases are executed. |

| Positive testing | Negative testing |
|---|---|
| Positive testing means testing the application by providing valid data. | Negative testing means testing the application by providing invalid data. |
| In positive testing, the tester always checks the application against a valid set of values. | In negative testing, the tester always checks the application against an invalid set of values. |
| Positive testing takes the positive point of view, for example: checking the first name field by providing a value such as "Akshay". | Negative testing takes the negative point of view, for example: checking the first name field by providing a value such as "Akshay123". |
| It verifies the known set of test conditions. | It verifies the unknown set of conditions. |
| Positive testing checks the behavior of the system by providing a valid set of data. | Negative testing checks the behavior of the system by providing an invalid set of data. |
| The main purpose of positive testing is to prove that the project works well according to the customer requirements. | The main purpose of negative testing is to break the project by providing an invalid set of data. |
| Positive testing tries to prove that the project meets all the customer requirements. | Negative testing tries to prove that the project does not meet all the customer requirements. |
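
The first name example from the table can be written as a pair of checks. The validation rule here (a non-empty, letters-only name) is an assumption made for the sketch; a real application may allow spaces, hyphens, or other characters.

```python
def is_valid_first_name(value: str) -> bool:
    """Hypothetical rule: a first name is non-empty and contains letters only."""
    return value.isalpha()


# Positive test: valid input should be accepted.
positive_result = is_valid_first_name("Akshay")

# Negative test: invalid input should be rejected, not accepted or crash.
negative_result = is_valid_first_name("Akshay123")

print(positive_result, negative_result)  # True False
```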

There are various models available in software testing, which are the following:

Following are the differences between smoke, sanity, and dry run testing:

| Smoke testing | Sanity testing | Dry-run testing |
|---|---|---|
| It is shallow, wide, and scripted testing. | It is narrow, deep, and unscripted testing. | Dry-run testing is a process in which the effects of a possible failure are internally mitigated. |
| When a build arrives, we write and execute automation scripts, so it is performed automatically. | It is performed manually. | For example, an aerospace company may conduct a dry run of a takeoff using a new aircraft and a runway before the first test flight. |
| It takes all the essential features and performs high-level testing. | It takes some significant features and performs in-depth testing. | |

To test any web application such as Yahoo, Gmail, and so on, we will perform the following testing:

We might have developed the software on one platform, and users might use it on different platforms. They may then encounter bugs and stop using the application, which could affect the business. Therefore, we perform one round of compatibility testing.

We can say anywhere between 2 and 5 test cases.

Initially, we write 2-5 test cases, but in later stages we write around 6-7 because, by then, we have better product knowledge and experience with the product, and we start reusing test cases.

We write around 7 test cases, so we can review 7*3=21 test cases. We can say 25-30 test cases per day.

We can run around 30-55 test cases per day.

The correct answer is that the testing team is not good, because a fundamental principle of software testing is that no product has zero bugs.

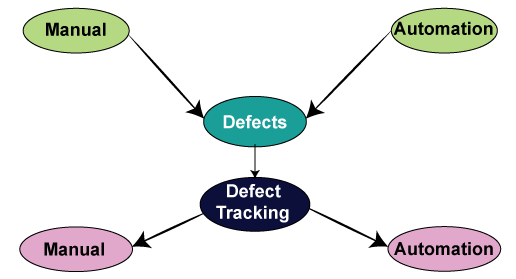

We can track the bug manually as:

Tracking the bug with the help of automation i.e., bug tracking tool:

We have various bug tracking tools available in the market, such as:

Product-based: Product-based companies use only one bug tracking tool.

Service-based: Service-based companies have many projects for different customers, and each project may use a different bug tracking tool.

The software can have a bug for the following reasons:

We perform testing whenever we need to check whether all requirements are implemented correctly and to make sure that we are delivering a product of the right quality.

We can stop testing whenever we have the following:

We can write test cases for the following types of testing:

| Different types of testing | Test cases |
|---|---|
| Smoke testing | Here, we cover only the standard features, so we can pull out existing test cases that cover all the necessary functions. Therefore, we do not have to write separate test cases for smoke testing. |
| Functional/unit testing | Yes, we write test cases for unit testing. |
| Integration testing | Yes, we write test cases for integration testing. |
| System testing | Yes, we write test cases for system testing. |
| Acceptance testing | Yes, but here the customer may write the test cases. |
| Compatibility testing | Here, we don't have to write test cases because the same test cases as above are used for testing on different platforms. |
| Adhoc testing | We don't write test cases for adhoc testing because it relies on random scenarios or ideas that come up at the time of testing. However, if we identify a critical bug, we convert that scenario into a test case. |
| Performance testing | We might not write test cases because we perform this testing with the help of performance tools. |
| Usability testing | Here, we use a regular checklist; we don't write test cases because we are only testing the look and feel of the application. |
| Accessibility testing | In accessibility testing, we also use a checklist. |
| Reliability testing | Here, we don't write manual test cases, as we use an automation tool to perform reliability testing. |
| Regression testing | Yes, we write test cases for functional, integration, and system testing. |
| Recovery testing | Yes, we write test cases for recovery testing, and we also check how the product recovers from a crash. |
| Security testing | Yes, we write test cases for security testing. |
| Globalization testing: localization (L10N) testing and internationalization (I18N) testing | Yes, we write test cases for L10N testing. Yes, we write test cases for I18N testing. |

| Traceability matrix | Test case review |
|---|---|
| Here, we make sure that each requirement has at least one test case. | Here, we check whether all the scenarios are covered for a particular requirement. |
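
The traceability check ("each requirement has at least one test case") is easy to automate once test cases are linked to requirement IDs. The IDs and the mapping below are hypothetical.

```python
def uncovered_requirements(requirements, test_cases):
    """Return the requirements that no test case traces back to."""
    covered = {req for reqs in test_cases.values() for req in reqs}
    return [req for req in requirements if req not in covered]


# Hypothetical requirement IDs and a test-case-to-requirements mapping.
requirements = ["REQ-1", "REQ-2", "REQ-3"]
test_cases = {
    "TC-1": ["REQ-1"],
    "TC-2": ["REQ-1", "REQ-3"],
}

missing = uncovered_requirements(requirements, test_cases)
print(missing)  # ['REQ-2']
```

This only confirms that a link exists; whether the linked test cases cover all scenarios of a requirement is what the test case review checks.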

Following are the significant differences between the use case and the test case:

| Test case | Use Case |
|---|---|
| It is a document describing the input, action, and expected response, used to check whether the application works correctly according to the customer requirements. | It is a detailed description of the customer requirements. |
| It is derived from test scenarios, use cases, and the SRS. | It is derived from the BRS/SRS. |
| While developing test cases, we can also identify loopholes in the specifications. | A business analyst or QA lead prepares it. |

We can perform both manual and automation testing. First, we will see how we perform manual testing:

| Different types of testing | Scenario |
|---|---|
| Smoke testing | Check the basic functionality: whether the pen writes or not. |
| Functional/unit testing | Check that the refill, pen body, pen cap, and pen size are as per the requirement. |
| Integration testing | Combine the pen and cap, integrate other sizes, and see whether they work fine together. |
| Compatibility testing | Write on various surfaces, in multiple environments and weather conditions; keep it in an oven and then write, keep it in a freezer and then write, try to write on water. |
| Adhoc testing | Throw the pen down and start writing, hold it vertically upward and write, write on a wall. |
| Performance testing | Test the writing speed of the pen. |
| Usability testing | Check whether the pen is user friendly, and whether we can write with it smoothly for extended periods. |
| Accessibility testing | Check whether people with disabilities can use the pen. |
| Reliability testing | Drop it and write, and write continuously to see whether it leaks. |
| Recovery testing | Throw it down and write. |
| Globalization testing: localization testing | Check that the price is standard and that the expiry date format is correct. |
| Globalization testing: internationalization testing | Check whether the print on the pen is in the language of the country. |

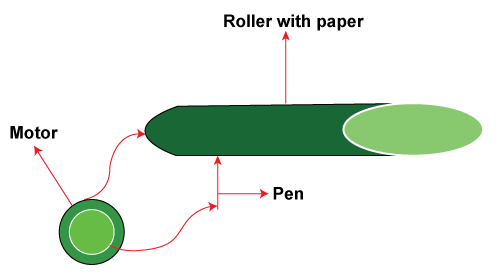

Now, we will see how we perform automation testing on a pen:

For this, take a roller and put some sheets of paper on it, then connect the pen to a motor and switch the motor on. The pen starts writing on the paper. Once the pen has stopped writing, count the number of lines it has written on each page and measure the length of each line. Multiplying these together gives the number of kilometers the pen can write.
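
The multiplication described above can be written out as a small calculation. The figures used (500 pages, 40 lines per page, 20 cm per line) are made-up inputs for illustration, and the sketch assumes every page carries the same number of lines of equal length.

```python
def writing_distance_km(pages: int, lines_per_page: int, line_length_cm: float) -> float:
    """Total distance written, assuming every page has identical lines."""
    total_cm = pages * lines_per_page * line_length_cm
    return total_cm / 100_000  # 100,000 cm in one kilometer


distance = writing_distance_km(pages=500, lines_per_page=40, line_length_cm=20.0)
print(distance)  # 4.0
```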
